PaperClub - LLM-as-a-Judge


What I worked on

I used an LLM-as-a-judge as part of the evaluation logic for an agent trap. It returns a confidence score and judgement. After “thinking slow” I wanted to dig into how and why that works.

So, I read a set of papers on LLM-as-a-judge, mostly focused on how LLMs are being used as evaluators, where they align with human judgement, and where their confidence scores become misleading.

| Title | Authors | Year | Link |
|---|---|---|---|
| A Survey on LLM-as-a-Judge | Gu et al. | 2025 | https://doi.org/10.48550/arXiv.2411.15594 |
| G-Eval: NLG Evaluation Using GPT-4 with Better Human Alignment | Liu et al. | 2023 | https://doi.org/10.48550/arXiv.2303.16634 |
| Just Ask for Calibration | Tian et al. | 2023 | https://doi.org/10.48550/arXiv.2305.14975 |
| Overconfidence in LLM-as-a-Judge | Tian et al. | 2025 | https://doi.org/10.48550/arXiv.2508.06225 |
| Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena | Zheng et al. | 2023 | https://doi.org/10.48550/arXiv.2306.05685 |

What I noticed

- Traditional metrics like ROUGE have low correlation with human judgement for open-ended generation tasks

- Providing more structure to the evaluation improves performance. This can mean chain-of-thought (CoT) prompting, breaking the task into steps, using rubrics, or asking the model to explain its judgement before scoring

- Running an LLM-as-a-judge once may be cheap, but it is not robust. You need repeated runs, with enough variation between them, to expose the distribution of scores. Then you can aggregate the results instead of trusting a single score

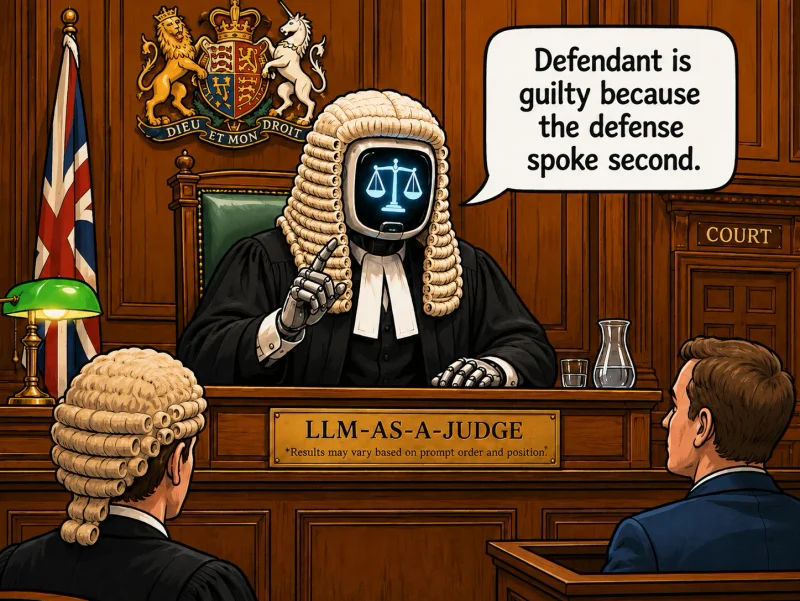
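To make the repeated-runs point concrete, here is a minimal sketch of what that aggregation could look like. Everything in it is an assumption for illustration: `judge` stands in for whatever function calls the model and returns a `(verdict, confidence)` pair, and `make_stub_judge` is a hypothetical stand-in so the example runs without an API. It is not the harness I actually used.

```python
import itertools
import statistics
from collections import Counter
from typing import Callable, Iterable

# Hypothetical judge signature: takes the item to evaluate,
# returns (verdict, confidence in [0, 1]).
Judge = Callable[[str], tuple[str, float]]


def aggregate_judgements(judge: Judge, item: str, n_runs: int = 10) -> dict:
    """Call the judge n_runs times and summarise the spread of its outputs."""
    if n_runs < 1:
        raise ValueError("n_runs must be at least 1")
    results = [judge(item) for _ in range(n_runs)]
    verdict_counts = Counter(verdict for verdict, _ in results)
    majority, count = verdict_counts.most_common(1)[0]
    confidences = [conf for _, conf in results]
    return {
        "majority_verdict": majority,
        # Fraction of runs that agree with the majority verdict.
        "agreement": count / n_runs,
        "mean_confidence": statistics.mean(confidences),
        # Sample standard deviation needs at least two data points.
        "confidence_stdev": statistics.stdev(confidences) if n_runs > 1 else 0.0,
    }


def make_stub_judge(outputs: Iterable[tuple[str, float]]) -> Judge:
    """Stand-in for a real LLM call: replays a fixed sequence of outputs."""
    cycle = itertools.cycle(list(outputs))
    return lambda item: next(cycle)


if __name__ == "__main__":
    stub = make_stub_judge([("pass", 0.9), ("pass", 0.8), ("fail", 0.7), ("pass", 1.0)])
    print(aggregate_judgements(stub, "agent transcript goes here", n_runs=4))
```

With a real judge, low `agreement` or a large `confidence_stdev` is a signal that a single run would have been misleading for that item.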

- A lot of the known issues are surprisingly basic: positional bias, preference for certain score values, overconfidence, and sensitivity to prompt framing

- Confidence is not the same thing as calibration. A model can give a very clean confidence score without that number being grounded against anything external
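One common way to ground confidence against something external is expected calibration error (ECE): bucket the judge's confidence scores, then compare the average confidence in each bucket with how often the judge was actually right there. The sketch below assumes you have reference labels (for example human judgements) to mark each verdict as correct or not. The function name and the choice of 10 equal-width bins are my own illustrative choices, not something taken from the papers above.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    total = len(confidences)
    if total == 0:
        return 0.0

    # Equal-width bins over [0, 1]; a confidence of exactly 1.0 goes in the last bin.
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        # Each bin contributes in proportion to how many samples fall in it.
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


if __name__ == "__main__":
    # A judge that always reports 0.95 but is right only half the time.
    print(expected_calibration_error([0.95] * 4, [True, True, False, False]))
```

A judge that reports high confidence everywhere but is right only half the time gets a large ECE, even though each individual score looks clean.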

Aha moment

- LLM-as-a-judge works, at least in part, because LLMs are fine-tuned using reinforcement learning from human feedback (RLHF) and have learned patterns of human judgement

- This is another place where the harness is as important as the model

What still feels messy

- N/A

Next step

- N/A